A story about a novice SRE's experience attending a HashiCorp Meetup

table of contents

- 1 ■ Bullet points of impressions

- 2 ■ HashiCorp Products: Active in AI and analytical platforms!

- 3 ■ HashiCorp software that supports container infrastructure

- 3.1 Lollipop! Managed Cloud

- 3.2 Why develop and use your own?

- 3.3 Overall Features

- 3.4 Terraform

- 3.5 Consul

- 3.6 Vault

- 3.7 PKI RootCA distribution issue

- 3.8 Using Consul Template 2

- 3.9 Application Token Distribution Issues

- 3.10 Solution to the Application Token distribution problem

- 3.11 Vault Token expired and caused a problem

- 3.12 Impressions

- 4 ■Terraform/packer supports applibot's DevOps

- 5 ■tfnotify - Show Terraform execution plan beautifully on GitHub

- 6 About dwango's use of HashiCorp OSS

I'm Teraoka, an infrastructure engineer.

Within the company, I belong to the SRE team and am responsible for the entire process of infrastructure design, construction, and operation.

Incidentally, SRE generally stands for "Site Reliability Engineering," but we call it "Service Reliability Engineering," because we look at the entire service, not just the site.

This time, I'm going to talk about attending a HashiCorp Meetup.

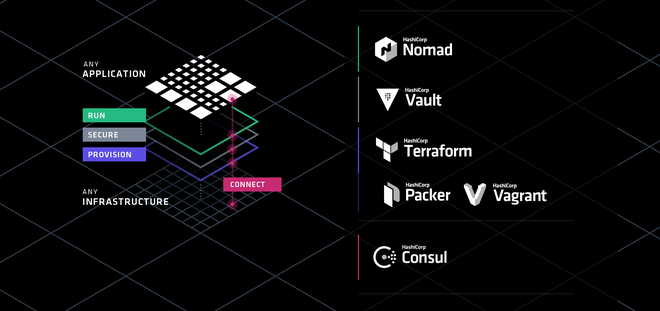

The venue was Shibuya Hikarie, so I made a short trip to Tokyo to learn about the HashiCorp suite of tools, which are currently the talk of the town for supporting DevOps.

It takes about 3 hours by Shinkansen from Osaka, so it's quite far...

I'm secretly hoping that they'll hold one in Osaka next time!

■ Bullet points of impressions

- Prosciutto and beer are the best

- Mitchell Hashimoto loves puns (he gave away chopsticks as a gift in reference to HashiCorp)

- Approximately 80% of people use Terraform

- Approximately 40% use Packer

- It seems like not many people are using Consul/Vault/Nomad yet

- I'm interested in Consul Connect (which can encrypt communication between services)

- I would like to try using Sentinel (Policy as Code), so I hope it will be released as open source!

DeNA provided us with prosciutto and beer, which were delicious

We also received a whole leg of prosciutto as a gift! pic.twitter.com/gkvdEB20zK

— HashiCorp Japan (@hashicorpjp) September 12, 2018

I found Mitchell Hashimoto's puns hilarious.

I was impressed that he actually followed through on his promise and handed out chopsticks at the venue.

From a technical standpoint, Terraform's adoption rate is phenomenal.

When asked, "Has anyone used it?", almost everyone raised their hand.

I'm only about halfway through learning Packer, but I personally think it's the best-practice tool for managing machine images.

At our company, we only use Terraform/Packer for a limited number of projects, so I thought we should start by modularizing and standardizing our code to create a more efficient system, and then begin evaluating the tools we haven't yet adopted, such as Consul.

So, I'll write about what the speakers presented.

■ HashiCorp Products: Active in AI and analytical platforms!

DeNA Co., Ltd. System Headquarters AI Systems Department Mr. Hidenori Matsuki

- Responsible for the AI & analytics infrastructure.

- Building and operating an infrastructure that allows AI R&D engineers to easily and freely develop.

- Solving challenges in the AI & analytics infrastructure using HashiCorp tools.

AI Infrastructure

The AI project consists of multiple small projects involving 2-3 people.

Each project uses AWS and GCP to handle highly sensitive data and massive amounts of data,

requiring the rapid setup of platform-specific infrastructure.

Resolving AI infrastructure issues

- Use of Terraform

- Terraform is used as an infrastructure provisioning tool

- Instances are launched using Terraform, and middleware is installed using itamae

- Instances can now be easily built using tools

- Machine learning infrastructure is built by launching GPU-equipped instances

- Proposed use of Packer

- We are moving forward with Dockerizing our AI infrastructure

- We want to use Packer to manage it in an AWS/GCP Docker repository

- We also want to write Packer in HCL (I understood this so well I couldn't help but nod in agreement...)

Analytics Infrastructure

- Collect service logs with fluentd

- Analyze the collected logs with Hadoop

- Import the results into BigQuery with embulk

- Visualize the results from Argus Dashboard

- Upgrading Hadoop is a pain...

- Even verifying the analysis infrastructure is difficult in the first place.

Resolving analytical infrastructure issues

- I'm going to try testing the analytics platform with Vagrant!

- But it's really difficult... (Apparently, this is a remaining issue)

Impressions

- I thought the multi-provider nature of Terraform was a perfect fit for the requirements.

- I agree with the decision not to use Terraform's provisioners (although we use Ansible instead of itamae).

- I completely agreed with the point about wanting to write Packer in HCL.

■ HashiCorp software that supports container infrastructure

Mr. Tomohiro Oda, Principal Engineer, Technology Infrastructure Team, Technology Department, GMO Pepabo, Inc

Lollipop! Managed Cloud

- Container-based PaaS

- Cloud with operational support, similar to a cloud-oriented rental server

- Dynamically scales according to container load

- Does not use Kubernetes or Docker

- Uses a proprietary container environment called Oohori/FastContainer/Haconiwa

- Everything is custom-developed (developed in Golang)

Why develop and use your own?

- Providing an inexpensive container environment requires a high density of containers.

- User-managed containers need to be continuously secure.

- Container resources and permissions need to be configurable and proactive (the containers themselves dynamically change resources).

- In short, it was necessary to meet the requirements!

Overall Features

- Hybrid environment of OpenStack and Baremetal

- Minimal images created with Packer, shared across all roles

- Uses Knife-Zero instead of Terraform Provisioner

- Development environment is Vagrant

- Strategy to abandon Immutable Infra due to frequent major specification changes and many stateless roles

Terraform

- We try to reuse modules as much as possible.

- tfstate is still managed in S3.

- Workspaces are used for Production and Staging.

- Setting lifecycle rules is essential for accident prevention.

- `terraform fmt` is run in CI.

Consul

- The main function is service discovery.

- Monitoring roles are split with Mackerel, depending on whether it is external monitoring or service monitoring.

- Name resolution is done using Consul's internal DNS and Unbound.

- It is also used as the storage backend for Vault.

- All nodes run the Consul agent and Prometheus's consul/node/blackbox exporters.

- Multi-stage SSH becomes convenient by using Consul for name resolution on the jump server.

- Proxy upstreams can be round-robined using Consul DNS.
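To get a feel for the name resolution part, here is a minimal Python sketch of looking up a service or node through Consul DNS. It assumes Consul's default `consul` domain and that the local resolver (Unbound in the talk) forwards that domain to Consul. The `proxy` service and `web01` node names in the usage note are hypothetical, and this is my own illustration, not the speaker's setup.

```python
import socket


def service_fqdn(service, domain="consul"):
    """Build the DNS name Consul serves for a service, e.g. proxy.service.consul."""
    return f"{service}.service.{domain}"


def node_fqdn(node, domain="consul"):
    """Build the DNS name Consul serves for a node, e.g. web01.node.consul."""
    return f"{node}.node.{domain}"


def resolve(name, port, getaddrinfo=socket.getaddrinfo):
    """Return the distinct IP addresses behind `name`, keeping the answer order."""
    addresses = []
    for *_, sockaddr in getaddrinfo(name, port, type=socket.SOCK_STREAM):
        if sockaddr[0] not in addresses:
            addresses.append(sockaddr[0])
    return addresses
```

For example, `resolve(service_fqdn("proxy"), 80)` would return the healthy instances of a hypothetical `proxy` service, and `node_fqdn("web01")` gives a name you could hand to SSH on the jump server. As far as I understand, Consul shuffles the order of its DNS answers, which is where the round-robin effect comes from.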

Vault

- Utilizes the PKI and Transit secrets engines

- All sensitive information stored in the database is encrypted with Vault

- Issued sensitive information is distributed with Chef

- Tokens are used while their TTL is extended

- Tokens expire once the max-lease-ttl deadline is reached
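To sort out the TTL behavior in my own head, I wrote a small Python model of a token that can be renewed but never beyond a maximum TTL counted from its creation. This is a simplified toy under my own assumptions, not Vault's implementation. The `Token` class and its field names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Token:
    """Toy model of a renewable token (not Vault's real data structure)."""
    created_at: int   # creation time in seconds
    ttl: int          # lifetime granted per issue or renewal, in seconds
    max_ttl: int      # hard cap on the total lifetime, in seconds
    expires_at: int = 0

    def __post_init__(self):
        # The first lease is already subject to the cap.
        self.expires_at = self.created_at + min(self.ttl, self.max_ttl)

    def renew(self, now: int) -> bool:
        """Extend the lease from `now`; return False if it has already expired."""
        if now >= self.expires_at:
            return False
        hard_limit = self.created_at + self.max_ttl
        self.expires_at = min(now + self.ttl, hard_limit)
        return True


DAY = 24 * 60 * 60
token = Token(created_at=0, ttl=10 * DAY, max_ttl=32 * DAY)
for day in (8, 16, 24, 31):
    token.renew(now=day * DAY)
print(token.expires_at // DAY)  # the lease stops at the 32-day cap
```

However often the token is renewed, the expiry never moves past the cap, so a long-running process needs a plan for getting a fresh token.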

PKI RootCA distribution issue

How to establish a TLS connection to the Vault server:

- Using Consul as storage automatically configures vault.service.consul for the active Vault.

- Vault is configured as a certificate authority, and the server certificate is issued manually.

- Vault is sealed upon restart.

Using Consul Template 2

- Reload Vault's server certificates with a SIGHUP signal

- SIGHUP does not seal Vault

- Can also be used with logrotate for audit logs

- The Root CA issued by Vault is distributed via Chef

Application Token Distribution Issues

- The application authenticates to Vault using AppRole.

- Upon authentication, a token with a specified TTL is issued.

- The application uses the token to communicate with Vault.

- It defeats the purpose if the application itself holds the AppRole's role_id and secret_id.

- The token should therefore be issued outside the application process.

Solution to the Application Token distribution problem

- Run a command that performs AppRole authentication when deploying the application

- The command POSTs a request to extend the TTL of secret_id, which acts as the authentication password

- Then, perform authentication and obtain a token.

- Place the obtained token in a path that the application can access.
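The login and token placement steps above could look roughly like the following Python sketch. It calls Vault's AppRole login endpoint (`POST /v1/auth/approle/login`) and writes the returned token to a file. The function names and the token path are hypothetical, the step that extends the secret_id TTL is left out, and this is not the command the speaker actually uses.

```python
import json
import os
import urllib.request


def approle_login(vault_addr, role_id, secret_id, opener=urllib.request.urlopen):
    """Log in via Vault's AppRole login endpoint and return the client token."""
    request = urllib.request.Request(
        f"{vault_addr}/v1/auth/approle/login",
        data=json.dumps({"role_id": role_id, "secret_id": secret_id}).encode(),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with opener(request) as response:
        return json.load(response)["auth"]["client_token"]


def write_token(token, path):
    """Write the token so that only the owning user can read it (mode 0600)."""
    fd = os.open(path, os.O_WRONLY | os.O_CREAT | os.O_TRUNC, 0o600)
    with os.fdopen(fd, "w") as handle:
        handle.write(token)
```

A deploy hook would call `approle_login(...)` and then `write_token(token, "/path/the/app/can/read")`, so the application only ever sees the token and never the role_id or secret_id.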

Vault Token expired and caused a problem

- The AppRole secret_id is set as a custom secret_id so that it can be renewed

- The token used to set the custom secret_id is issued with auth/token

- Each mounted secrets engine has a max-lease-ttl setting, and there is also a system-wide max TTL

- If neither is set, the upper limit is the system max TTL of 32 days

- When the token expired, 403 errors frequently occurred in audit.log.

- Monitoring of audit.log was added to Consul checks on the Vault server.

- Detected issues are notified to Slack by Consul Alert.
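The audit.log monitoring could be sketched as a Consul script check along these lines. Consul treats exit code 0 as passing, 1 as warning, and anything else as critical. I am assuming that denied requests show up in the JSON audit log with "permission denied" in the `error` field; the thresholds and function names are hypothetical, and the speaker's actual check was not shown.

```python
import json


def count_permission_denied(lines):
    """Count audit entries whose "error" field mentions a denied request."""
    denied = 0
    for line in lines:
        try:
            entry = json.loads(line)
        except json.JSONDecodeError:
            continue  # skip partially written or non-JSON lines
        if isinstance(entry, dict) and "permission denied" in str(entry.get("error", "")):
            denied += 1
    return denied


def check_status(denied, warn_at=1, crit_at=10):
    """Map a count to a Consul script-check exit code: 0 passing, 1 warning, 2 critical."""
    if denied >= crit_at:
        return 2
    if denied >= warn_at:
        return 1
    return 0
```

A wrapper script would read the most recent lines of audit.log, pass them to `count_permission_denied`, and exit with `check_status(...)`, leaving the Slack notification to the alerting side.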

Impressions

- It's impressive that they're developing their own solution without using Kubernetes or Docker.

- Many companies don't use Terraform's Provisioner and instead use other configuration management tools.

- Defining a backend for tfstate and managing it in S3 seems to be the most stable approach.

- I don't usually write lifecycle settings, so I realized I need to learn them.

- I'm also working on a project involving multi-stage SSH from a jump host, so I'd like to try using Consul.

- To be honest, I don't really understand Vault... I need to study it more and relearn it.

■Terraform/packer supports applibot's DevOps

Applibot, Inc. (CyberAgent Group)

System Operations Engineer, Planning & Development Division,

- Teams are structured as separate companies for each application.

- There are only two SYSOPs (people who prepare the server environment)!

- SYSOPs often collaborate with the application team's server engineers.

- A story about how we solved past problems using Terraform/Packer.

Case 1: Image creation

- Uses RUNDECK/Ansible/Packer

- Creates machine images in conjunction with Ansible

- Benefits: Allows you to template the image creation process

- Before: launching an EC2 instance from an AMI, applying configuration changes with Ansible, and deleting the server the image was taken from were all performed manually

- After: everything is done with a single packer build command

Case 2: Building a load-test environment

- Uses Terraform/Hubot

- SYSOP is responsible for setting up, modifying, and reviewing the environment for load testing.

- Infrastructure setup

  - Ultimately, an environment equivalent to the production environment

  - Created at the time of testing

  - Created each time a test is conducted, because maintaining it at the same scale as production is costly

  - Kept small when not in use, even during the testing period

- Servers used for load testing

  - JMeter is used, with separate accounts for the network and the server

  - Master-slave configuration; slaves are scaled using Auto Scaling

  - Created each time a test is conducted, deleted when not in use

- Various monitoring tools

  - Grafana/Prometheus/Kibana + Elasticsearch

Before:

・All instances were managed manually with awscli

・The startup order was adjusted with a script

・The scripts turned into a "secret recipe" that was hard for others to follow

・The SYSOP side scaled the environment up and down every morning and evening

- After

・All components are now managed with Terraform

・Existing resources are handled with terraform import

・If consistency issues arise, tfstate is modified directly

・Startup management is centralized

・The configuration can be understood by reading the Terraform code

・Creation and scaling can now be done with terraform apply

・The build order is specified with depends_on

・Infrastructure scaling is done by switching vars

・Everything, including notifications, can now be handled from Slack

Case 3: Building a new environment

・Uses Terraform/GitLab

Before:

・Sealing the root account

・Consolidated Billing settings

・Creating IAM users, groups, and switch roles

・Creating an S3 bucket for CloudTrail/logs

・Network settings and opening monitoring ports

- After

- Account settings can now be managed

- Root account sealed

- Consolidated Billing settings

- IAM user created for Terraform

- Terraform execution is OK

- Configuration changes can also be managed with Merge Request

・It is important to create templates for routine tasks!!

Impressions

- Creating machine images with Packer + Ansible is really convenient.

- You can create machine images with Packer while managing the configuration steps to be executed in Ansible.

- In addition to Ansible, Packer can also execute shell scripts.

- We also use Terraform to build load testing environments, so this was helpful.

- We haven't used Hubot for chat notifications yet, so I'd like to try that.

- It seems like all the processes required when building a new environment are modularized, so this was very helpful.

■tfnotify - Show Terraform execution plan beautifully on GitHub

b4b4r07, Software Engineer at Mercari, Inc

What is tfnotify?

- A Go-based CLI tool that parses the execution results of Terraform and sends notifications to a specified recipient.

- Used by piping, such as `terraform apply | tfnotify apply`.

Why is it necessary?

- We use Terraform in the Microservices domain.

- From an ownership perspective, reviews and merges should be done by each Microservices team, but there are cases where we want the Platform team to review them as well.

- We want the Platform/Microservices teams to understand the importance of IaC, write their code with Terraform, and make it a habit to review the execution plan every time.

- We want to avoid the hassle of going to the CI screen (we want to check it quickly on GitHub).

HashiCorp tools at Mercari

- There are over 70 Microservices.

- Terraform is used to build the infrastructure for all Microservices and their Platforms.

- Developers are encouraged to practice IaC (Infrastructure as Code).

- There are 140 tfstates.

- All Terraform code is managed in a single central repository.

Repository Configuration

- Separate directories for each MicroService

- Separate tfstate files for each Service

- Separate files for each Resource

- Delegate permissions using CODEOWNERS

Delegation of authority

- GitHub features

- Allows merging to be disabled until approved by the person listed in CODEOWNERS

- Prevents unauthorized changes
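To picture how per-directory ownership works, here is a deliberately simplified Python sketch of CODEOWNERS matching. It only handles `*` and directory-prefix patterns such as `/microservices/payment/`, whereas the real file supports gitignore-style patterns. The team names and paths are hypothetical. The one rule I rely on from GitHub is that the last matching pattern takes precedence.

```python
def parse_codeowners(text):
    """Return (pattern, owners) pairs, ignoring blank lines and comments."""
    rules = []
    for line in text.splitlines():
        line = line.split("#", 1)[0].strip()
        if line:
            pattern, *owners = line.split()
            rules.append((pattern, owners))
    return rules


def owners_for(path, rules):
    """Return the owners of `path`; the last matching rule wins."""
    matched = []
    for pattern, owners in rules:
        # Simplification: only "*" and directory-prefix patterns are supported.
        if pattern == "*" or ("/" + path).startswith(pattern):
            matched = owners
    return matched


# Hypothetical CODEOWNERS content for a central Terraform repository.
CODEOWNERS = """
# The Platform team reviews everything by default.
*                          @example/platform
/microservices/payment/    @example/payment-team
"""
rules = parse_codeowners(CODEOWNERS)
print(owners_for("microservices/payment/main.tf", rules))
```

With rules like these, a change under one service's directory needs approval from that service's team, while everything else falls back to the Platform team.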

Implementing tfnotify

- Uses io.TeeReader

- Terraform execution results are structured with a custom parser

- POST messages can be written using Go templates

- Configuration is stored in YAML
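tfnotify itself is written in Go, but the idea behind the implementation can be sketched in Python: pass the Terraform output through untouched while keeping a copy (what io.TeeReader does in Go), parse the copy, and fill a message template. This is my own rough analogue, not tfnotify's code. It assumes uncolored output (for example `terraform plan -no-color`) and only looks at the plan summary line. The template text is hypothetical.

```python
import io
import re
import string

PLAN_SUMMARY = re.compile(r"Plan: (\d+) to add, (\d+) to change, (\d+) to destroy")
MESSAGE = string.Template("Plan result: +$add ~$change -$destroy")


def tee(source, sink):
    """Copy `source` to `sink` line by line and return everything that was read."""
    captured = io.StringIO()
    for line in source:
        sink.write(line)
        captured.write(line)
    return captured.getvalue()


def parse_plan(output):
    """Extract the add/change/destroy counts, or None if there is no summary line."""
    match = PLAN_SUMMARY.search(output)
    if match is None:
        return None
    add, change, destroy = map(int, match.groups())
    return {"add": add, "change": change, "destroy": destroy}


def render(result):
    """Render the notification body from a parsed result."""
    return MESSAGE.substitute(result)
```

In a pipeline you would call `tee(sys.stdin, sys.stdout)` so the CI log still shows the full output, then post `render(parse_plan(...))` as a comment. The real tool keeps its templates and destinations in YAML, which this sketch skips.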

Impressions

- I was wondering how to manage code with Git while using Terraform in a central repository, so this was helpful.

- It would be even better if tfnotify supported GitLab CI and ChatWork.

- It's important to have developers practice IaC, and it's important to carefully check the Terraform execution plan every time.

- I want to try using GitHub's CODEOWNERS feature (I want to migrate to GitHub first...).

About dwango's use of HashiCorp OSS

Mr. Eigo Suzuki, Dwango Co., Ltd., Second Service Development Division, Dwango Cloud Service Department, Consulting Section, Second Product Development Department, First Section

dwango's infrastructure

VMware vSphere, AWS, bare metal, and some Azure/OpenStack.

HashiCorp Tools at dwango

Packer

- Used for creating Vagrant boxes.

- Also used for creating AMIs, now that AWS environments are increasing.

Consul

- Rewrites application configuration files when the MySQL server goes down.

- Used as a KVS and health check.

- Used as a dynamic inventory for Ansible playbooks
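Since I want to try the dynamic inventory idea myself, here is a minimal Python sketch of turning Consul catalog data into the JSON shape Ansible expects from an inventory script. The sample entries are hypothetical and only mimic a few fields of a `/v1/catalog/service/<name>` response. This is an illustration of the idea, not dwango's implementation.

```python
import json


def build_inventory(catalog_entries):
    """Group Consul catalog entries by service name in Ansible's inventory JSON shape."""
    inventory = {"_meta": {"hostvars": {}}}
    for entry in catalog_entries:
        group = inventory.setdefault(entry["ServiceName"], {"hosts": []})
        node = entry["Node"]
        if node not in group["hosts"]:
            group["hosts"].append(node)
        # Prefer the service-level address and fall back to the node address.
        address = entry.get("ServiceAddress") or entry["Address"]
        inventory["_meta"]["hostvars"][node] = {"ansible_host": address}
    return inventory


# Hypothetical sample shaped like a Consul catalog response.
sample = [
    {"Node": "db1", "Address": "10.0.0.11", "ServiceName": "mysql", "ServiceAddress": ""},
    {"Node": "db2", "Address": "10.0.0.12", "ServiceName": "mysql", "ServiceAddress": ""},
]
print(json.dumps(build_inventory(sample), indent=2))
```

A real inventory script would fetch the entries from the Consul HTTP API and print this JSON when Ansible calls it with `--list`.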

Terraform

- Used for configuration management of AWS environments.

- Used in Nico Nico Survey, friends.nico, NicoNare, and parts of N-Yobiko.

CI with Terraform (Tools Used)

・GitHub

・Jenkins

・Terraform

・tfenv

CI (flow) with Terraform

1. A pull request is submitted.

2. In Jenkins, plan -> apply -> destroy the pr_test environment.

3. If step 2 passes, plan -> apply to the sandbox environment.

4. If all passes, merge the changes.
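The flow above can be sketched as a small Python driver, the kind of thing a Jenkins job might call. The directory names are hypothetical, and tearing down pr_test even after a failed apply is my own assumption rather than something stated in the talk. `-auto-approve` is the Terraform flag that skips the interactive confirmation.

```python
import subprocess


def terraform(directory, *args, run=subprocess.run):
    """Run one terraform subcommand in `directory`; return True on exit code 0."""
    return run(["terraform", *args], cwd=directory).returncode == 0


def ci_pipeline(pr_test_dir, sandbox_dir, run=subprocess.run):
    """plan -> apply -> destroy on pr_test, then plan -> apply on sandbox."""
    ok = (terraform(pr_test_dir, "plan", run=run)
          and terraform(pr_test_dir, "apply", "-auto-approve", run=run))
    # Assumption: always tear the throwaway environment down, even after a failure.
    destroyed = terraform(pr_test_dir, "destroy", "-auto-approve", run=run)
    if not (ok and destroyed):
        return False
    return (terraform(sandbox_dir, "plan", run=run)
            and terraform(sandbox_dir, "apply", "-auto-approve", run=run))
```

The pull request would only be mergeable when `ci_pipeline(...)` returns True, which matches step 4 of the flow.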

The good things about CI with Terraform

- You can see on GitHub whether the apply succeeded or failed.

- Because the environments are split into dev/prod, you can confirm in advance that the apply can be run safely.

- tfenv makes it easy to verify upgrades of Terraform itself.

CD with Terraform

- We want to automatically deploy to development, etc., once CI passes.

- We've made it a job using Jenkins to ensure it can be done securely.

- Deployments can now be done without any problems regardless of who performs the task.

- Results are notified via Slack, making it easy to check.

Impressions

- Packer is still the most convenient tool for creating AMIs.

- We use Ansible at our company, so I'd like to try using Consul as a dynamic inventory.

- Switching between tfenv versions is convenient.

- Terraform sometimes fails to apply even if the plan passes, so deploying to a pr_test environment is a good approach.

- Jenkins is still the best for continuous deployment (CD). It's easier and more reliable to run it as a job than to execute commands directly.

...That's a summary, mostly in bullet points.

We at our company would also like to actively use HashiCorp's tools to implement IaC!
