[AWS/Athena] How to create a table using partition projection for WAF log analysis

table of contents

Introduction

Hello!

It's Mikoto, and it's been a year since I started working as an infrastructure engineer in July. It's been four months since I officially entered my second year, and I'm almost at the doorstep of my third year.

I recently tried to analyze WAF logs using Athena partition projection , so I decided to write an article about it.

*Please note that this article is not suitable for those who are unfamiliar with how to use Athena or what a table is

What is Amazon Athena?

This is not about the Greek goddess of wisdom and war!

Amazon Athena a query service that allows you to directly analyze data stored in S3 .

For example, even if you have enabled log output to S3 using Elastic Load Balancer, Cloud Front, WAF, etc., when you want to identify the number of requests per minute or a specific access source IP address from a huge amount of logs, it can be a hassle to download a text file and analyze it locally, right?

In such cases, if you use Amazon Athena, you can analyze logs simply by

running queries on the console If you would like to know more, please read the documentation

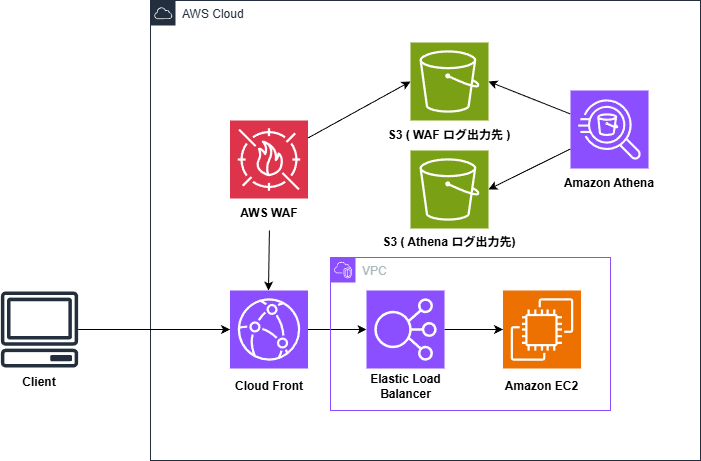

About the execution environment

This time, the query is executed with the following configuration.

Please note that

specified later will change

specified later will change

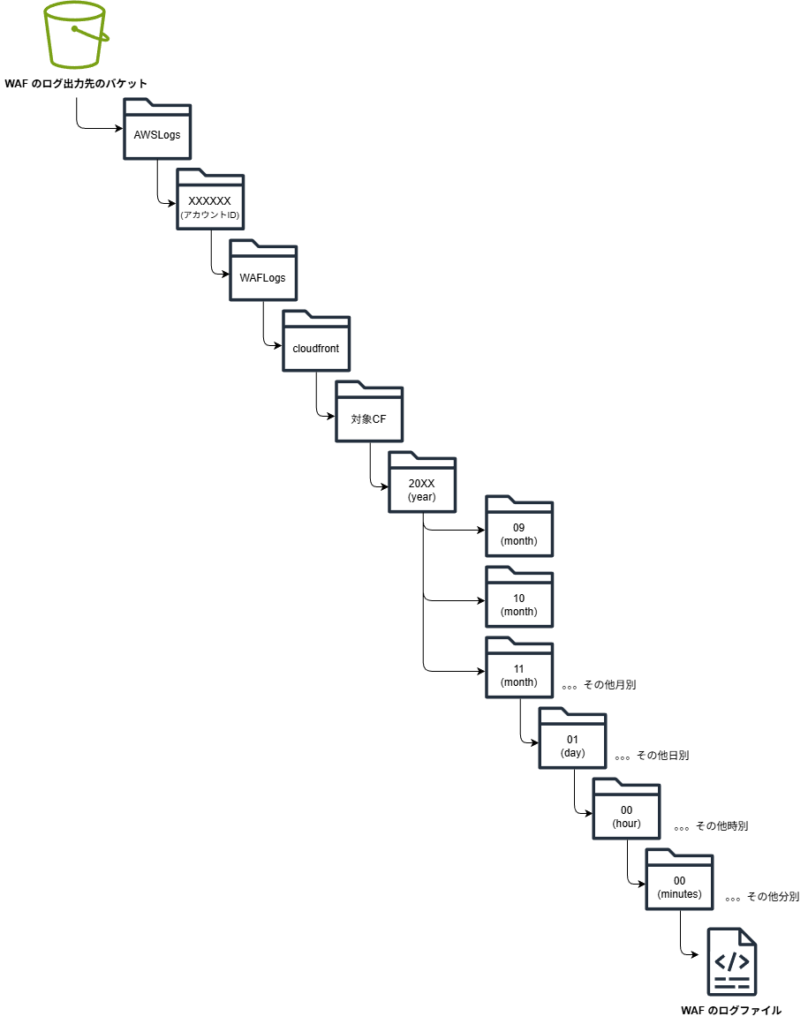

The S3 hierarchy used is as follows:

Creating a table

This time , we will create a table using

this As mentioned above, CloudFront is in the front row, so the S3 path includes /cloudfront.

CREATE EXTERNAL TABLE `waf_logs_partition_projection`( `timestamp` bigint, `formatversion` int, `webaclid` string, `terminatingruleid` string, `terminatingruletype` string, `action` string, `terminatingrulematchdetails` array<struct<conditiontype:string,sensitivitylevel:string,location:string,matcheddata:array<string>>>, `httpsourcename` string, `httpsourceid` string, `rulegrouplist` array<struct<rulegroupid:string,terminatingrule:struct<ruleid:string,action:string,rulematchdetails:array<struct<conditiontype:string,sensitivitylevel:string,location:string,matcheddata:array<string>>>>,nonterminatingmatchingrules:array<struct<ruleid:string,action:string,overriddenactio n:string,rulematchdetails:array<struct<conditiontype:string,sensitivitylevel:string,location:string,matcheddata:array<string>>>,challengeresponse:struct<responsecode:string,solvetimestamp:string>,captcharesponse:struct<responsecode:string,solvetimestamp:string>>>,excludedrules:string>>, `ratebasedrulelist` array<struct<ratebasedruleid:string,limitkey:string,maxrateallowed:int>>, `nonterminatingmatchingrules` array<struct<ruleid:string,action:string,rulematchdetails:array<struct<conditiontype:string,sensitivitylevel:string,location:string,matcheddata:array<string>>>,challengeresponse:struct<responsecode:string,solvetimestamp:string>,captcharesponse:struct<responsecode:string,solvetimestamp:string>>>, `requestheadersinserted` array<struct<name:string,value:string>>, `responsecodesent` string, `httprequest` struct<clientip:string,country:string,headers:array<struct<name:string,value:string>>,uri:string,args:string,httpversion:string,httpmethod:string,requestid:string,fragment:string,scheme:string,host:string>, `labels` array<struct<name:string>>, `captcharesponse` struct<responsecode:string,solvetimestamp:string,failurereason:string>, `challengeresponse` struct<responsecode:string,solvetimestamp:string,failurereason:string>, `ja3fingerprint` string, `ja4fingerprint` string, `oversizefields` string, `requestbodysize` int, `requestbodysizeinspectedbywaf` int) PARTITIONED BY ( `log_time` string ) ROW FORMAT SERDE 'org.openx.data.jsonserde.JsonSerDe' STORED AS INPUTFORMAT 'org.apache.hadoop.mapred.TextInputFormat' OUTPUTFORMAT 'org.apache.hadoop.hive.ql.io.HiveIgnoreKeyTextOutputFormat' LOCATION 's3://{WAF TBLPROPERTIES( 'projection.enabled' = 'true', 'projection.log_time.type' = 'date', 'projection.log_time.range' = '2025/11/01/00/00,NOW', 'projection.log_time.format' = 'yyyy/MM/dd/HH/mm', 'projection.log_time.interval' = '1', 'projection.log_time.interval.unit' = 'minutes', 'storage.location.template' = 's3://{WAF log output bucket}/AWSLogs/{account ID}/WAFLogs/cloudfront/{target CF}/${log_time}')

*Please note that if you are applying this to a regional resource such as ALB, this will be the region name, such as /ap-northeast-1/ (for the Tokyo region).

*In this structure, yyyy/MM/dd/HH/mm , but this may need to be changed depending on your S3 hierarchy structure. If it is yyyy/MM/dd/HH you will need to change projection.log_time.interval.unit

About Partition Projection

Partition projection the subject of this article , is a feature that automates partition management to improve query performance.

(^ω^) <Japanese is OK

Don't make that face,

I'm going to explain.

The following is an example of a table creation query.

This query specifies logs from 2025/11/01 to the present.

PARTITIONED BY ( `log_time` string ) ~Omitted~ TBLPROPERTIES( 'projection.enabled' = 'true', 'projection.log_time.type' = 'date', 'projection.log_time.range' = '2025/11/01/00/00,NOW', 'projection.log_time.format' = 'yyyy/MM/dd/HH/mm', 'projection.log_time.interval' = '1', 'projection.log_time.interval.unit' = 'minutes', 'storage.location.template' = 's3://{WAF log output destination bucket}/AWSLogs/{account ID}/WAFLogs/cloudfront/{target CF}/${log_time}')

The log files in an S3 bucket are arranged in a hierarchical structure, such as year/month/day/hour/minute, right? This is called

partitioning Normally, you need to periodically run a command to register the partition locations in the AWS Glue Data Catalog

┌[ ∵]┐ <New data added? Where is it located?

You have to go through this process with Glue every time

By providing Athena with the partition hierarchy in advance, Athena will automatically calculate where the log data will be stored .

This way, newly added partitions will be automatically recognized , eliminating the need to read the AWS Glue Data Catalog every time the range of log data to be analyzed increases.

└[ ∵]┘ <If the data is stored in this hierarchical structure, new logs will come here

It looks like this.

This is called partition projection

How to write

we will explain how to write it, assuming you understand how it actually works

PARTITIONED BY ( `log_time` string ) ~Omitted~ TBLPROPERTIES( 'projection.enabled' = 'true', 'projection.log_time.type' = 'date', 'projection.log_time.range' = '2025/11/01/00/00,NOW', 'projection.log_time.format' = 'yyyy/MM/dd/HH/mm', 'projection.log_time.interval' = '1', 'projection.log_time.interval.unit' = 'minutes', 'storage.location.template' = 's3://{WAF log output destination bucket}/AWSLogs/{account ID}/WAFLogs/cloudfront/{target CF}/${log_time}')

As mentioned earlier, we mainly use two clauses: PARTITIONED and TBLPROPERTIES

PARTITIONED:

Specify the partition key.

Here, we use a key named log_time, but you can set other keys.

If the key overlaps with an existing column name, Athena may get confused and throw an error, so basically set it to a name that does not exist in the original log data.

TBLPROPERTIES:

Specifies whether to enable partition projection and the format details for the specified key.

In this example, we are sorting the information contained in log_time and providing it to Athena.

projection.enabled: Declares "I'm going to use partition projection!"type: Data type (in this case it is a date, so date)range: The range of the data from "beginning" to "end". Using NOW is very useful as it will set the range up to the moment the query is executed.format: The format of the S3 folder name (e.g. yyyy/MM/dd)interval: The interval at which data is added (1 for every minute)storage.location.template: Where to apply the calculated value to the S3 path. ${log_time} is the date calculated by Athena.

Please note that if PARTITIONED and TBLPROPERTIES are different, the following error will occur, so be sure to write them so that they match.

INVALID_TABLE_PROPERTY: Table {{database name}}.{{table name}} is configured for partition projection, but the following partition columns are missing from the projection configuration: [year, month, day, hour]

This query was executed against the '{{database name}}' database unless qualified in the query. Please post the error message in our forum or contact Customer Support with the query ID: XXXX

*At the time, I hadn't studied enough, so I wrote different values and got stuck

Also, in this article, storage.location.template is the path when using CloudFront.

Please note that if a WAF is linked to the LB, the path will be as follows:

LOCATION 's3://{WAF log output destination bucket}/AWSLogs/{account ID}/WAFLogs/ap-northeast-1(region)/{target WAF}/' ~Omitted~ 'storage.location.template' = 's3://{WAF log output destination bucket}/AWSLogs/{account ID}/WAFLogs/${region}/{target WAF}/${log_time}')

Execute the query

This concludes the explanation of creating a table. Finally, let's use the table we created!

Let's scan the data using the log_time of the partition key we specified earlier.

SELECT from_unixtime(timestamp/1000, 'Asia/Tokyo') AS JST, * FROM waf_logs_partition_projection WHERE log_time BETWEEN '2025/11/16/00/00' AND '2025/11/16/00/59';

* If the format is yyyy/MM/dd/HH/ change it to 2025/11/16/00/.

If log data is output here, the setup is complete 🎉🎉

If you don't get any values when executing this, please check the following!

- Is the partition key set correctly?

- Is there an error in the path specified in

storage.location.template

Conclusion

What did you think?

Although there was no problem with the query for log analysis itself, I had a lot of trouble creating the table, so I studied it a bit more and wrote an article about it.

I learned a lot by slowly reading the documentation and writing down the parts I was doing while asking my seniors and the AI for advice on how to do it on my blog!!

I hope this article was helpful to you.

Thank you for your continued support!

reference

An illustration of

partitioning

Using Partition Projection in Amazon Athena.

Creating a table for AWS WAF S3 logs in Athena using Partition Projection.

How to select a partition key.

2

2