[2016] Microsoft's Emotion API Determines the Best Companion at TGS2016 [Review]


Hello.

I'm Mandai, the Wild team member in charge of development.

My wife asked me to bevel the edges of the pumpkin pieces for the simmered pumpkin she was making, so I trimmed edges for the first time in a long while. I really need to learn from the delicate techniques of Japanese cuisine.

Today I wanted to try Microsoft's Emotion API and went looking for material, but the only suitable images I had on hand were photos of the booth models from Tokyo Game Show 2016 (TGS2016). So I'd like to hold a "2016 Year in Review! TGS2016 Best Booth Model Contest"!

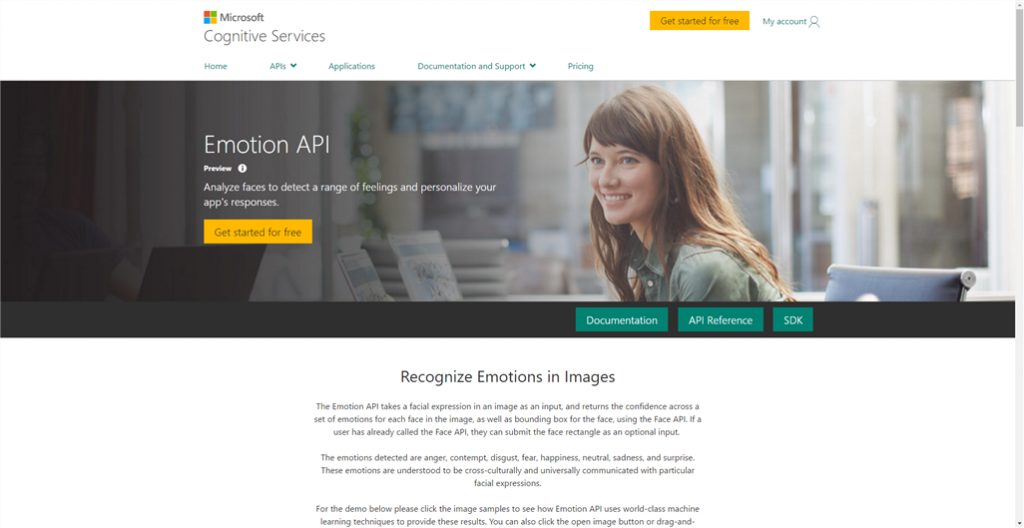

Preparing to use Emotion API with a Microsoft account

To use the Emotion API, you need an API key. First, sign up for the Emotion API on the Microsoft Cognitive Services website.

Click the "My Account" link at the top right to go to the login screen.

- Microsoft account

- GitHub account

- LinkedIn account

It seems you can log in using one of these three methods.

I was puzzled as to why LinkedIn was there, but then I remembered that it was acquired by Microsoft.

Once you have logged in, enable the API you want to use by clicking "Get started for free" next to "Sign Out" in the upper right corner.

This time, we will enable the Emotion API.

Tick the checkbox in the row whose "Product Name" is "Emotion," and also tick "I agree to the Microsoft Cognitive Services Terms and Microsoft Privacy Statement" at the bottom.

Then, simply press "Subscribe," and you're ready to go.

The screen will change to show a list of the currently enabled APIs.

Currently (as of December 27, 2016), the Emotion API is in preview, so it can be used for free up to 30,000 times per month.

GCP's Vision API uses a slightly different pricing structure: it is free for up to 1,000 units per month.

A "unit" is a bit confusing, but it refers to one analysis item. For example, face recognition on an image counts as 1 unit, and text recognition on the same image counts as another unit. You can obtain data for multiple items from a single image.

As an aside, the Vision API stands out for the larger amount of information it returns.

Even with just face recognition, it can acquire detailed information, including the position of facial features, which may be useful for further processing.

Getting back to the point, once you enable the Emotion API, you need to obtain an API Key.

Since two API Keys become active when you enable the API, you can simply use either one.

The API key is shown masked with "X" characters in the Keys section of the list. Click Show to reveal and copy it, or click the Copy link to copy it directly.

Once you have successfully obtained the API key, your work here is done!

If you want to try it out, curl seems like a good option

If you have a Linux environment, you can quickly try it out using curl.

The Emotion API documentation includes an example that sends the URL of a file on the Internet in JSON format, but this time we will send a local binary file directly from curl.

    curl -v -X POST "https://api.projectoxford.ai/emotion/v1.0/recognize" \
      -H "Content-Type: application/octet-stream" \
      -H "Ocp-Apim-Subscription-Key: [API Key]" \
      --data-binary "@[/path/to/image]"

To send the file directly:

- Set Content-Type to "application/octet-stream"

- Pass the image path, prefixed with "@", to the --data-binary option

If you receive HTTP status 200 and JSON data like the following, it worked.

(Formatted with line breaks and tabs for readability, but it's actually one line of data.)

    [
      {
        "faceRectangle": {
          "height": 184,
          "left": 223,
          "top": 217,
          "width": 184
        },
        "scores": {
          "anger": 2.41070044E-08,
          "contempt": 4.531843E-06,
          "disgust": 7.3893716E-07,
          "fear": 1.44139625E-08,
          "happiness": 0.9999242,
          "neutral": 6.80201556E-05,
          "sadness": 3.14932123E-07,
          "surprise": 2.19046137E-06
        }
      }
    ]
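As a minimal sketch of reading this response in PHP (the language used later in this post), the snippet below decodes the JSON and picks the highest-scoring emotion for each detected face. The function name `dominant_emotions` is hypothetical and not part of the Emotion API; the sample payload is the response shown above, and `array_key_first` assumes PHP 7.3 or later.

```php
<?php
// Hypothetical helper: returns the top-scoring emotion name for each face.
function dominant_emotions(string $json): array
{
    $faces = json_decode($json, true);
    if (!is_array($faces)) {
        return []; // invalid JSON or an unexpected payload
    }
    $result = [];
    foreach ($faces as $face) {
        if (!is_array($face) || empty($face['scores'])) {
            continue; // skip entries without a scores object
        }
        $scores = $face['scores'];
        arsort($scores);                      // highest score first, keys preserved
        $result[] = array_key_first($scores); // name of the strongest emotion
    }
    return $result;
}

// Sample payload copied from the response above.
$json = '[{"faceRectangle":{"height":184,"left":223,"top":217,"width":184},'
      . '"scores":{"anger":2.41070044E-08,"contempt":4.531843E-06,'
      . '"disgust":7.3893716E-07,"fear":1.44139625E-08,"happiness":0.9999242,'
      . '"neutral":6.80201556E-05,"sadness":3.14932123E-07,"surprise":2.19046137E-06}}]';

print_r(dominant_emotions($json));
```

Returning one entry per face keeps the helper usable for group photos, where the API returns several faces in the same array.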

Once you get this far, the fun really begins.

Rewrite it in PHP

The acquired analytical data can be processed, used to create and display web pages, and many other interesting things can be done with it.

This time, I want to play around with putting the analytical data into a database, so I'll rewrite it in PHP.

That said, it's just a matter of implementing the above command.

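    // Emotion API endpoint, subscription key, and the local image to analyze
    $url = 'https://api.projectoxford.ai/emotion/v1.0/recognize';
    $subscription_key = 'your api key';
    $path = '/path/to/image';

    $ch = curl_init($url);
    curl_setopt_array($ch, [
        CURLOPT_HTTPHEADER => [
            'Content-Type: application/octet-stream',
            'Ocp-Apim-Subscription-Key: ' . $subscription_key,
        ],
        CURLOPT_POST => true,
        CURLOPT_RETURNTRANSFER => true, // return the response instead of printing it
        CURLOPT_HEADER => true,         // include the response headers in $res
        CURLOPT_VERBOSE => true,        // log the exchange to stderr, like curl -v
        CURLOPT_POSTFIELDS => file_get_contents($path), // raw image bytes as the body
    ]);
    $res = curl_exec($ch);
    curl_close($ch);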

Next, you can build a list of files and loop through them to analyze a large number of images.

However, don't forget that there is a limit of 20 requests per minute, so you will need to space out the calls using sleep or a similar function.
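One way to respect that limit is to pause a fixed interval between calls. The sketch below is an assumption about how such a loop could look, not the code actually used for this post: `analyze_paced` is a hypothetical helper, the analyzer is passed in as a callable, and the pause function is injectable so the demo runs without really sleeping.

```php
<?php
// Hypothetical helper: calls $analyze for each path and waits between calls
// so that at most $perMinute requests are sent per minute.
function analyze_paced(array $paths, callable $analyze, int $perMinute = 20, ?callable $pause = null): array
{
    $pause = $pause ?? 'sleep';                    // real runs use PHP's sleep()
    $interval = (int) ceil(60 / $perMinute);       // 3 seconds at 20 requests/minute
    $results = [];
    $first = true;
    foreach ($paths as $path) {
        if (!$first) {
            $pause($interval);                     // wait before every call but the first
        }
        $first = false;
        $results[$path] = $analyze($path);
    }
    return $results;
}

// Demo with stand-ins: a fake analyzer and a pause that only records the wait.
$waits = [];
$out = analyze_paced(
    ['a.jpg', 'b.jpg', 'c.jpg'],
    fn($path) => strtoupper($path),
    20,
    function (int $seconds) use (&$waits) { $waits[] = $seconds; }
);
```

In a real run you would pass a function that performs the cURL request for one file and leave the last argument out, so the loop actually sleeps between requests.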

I tried analyzing about 200 images, and the results were good.

Because of the usage limits, calling the API again and again for the same images is wasteful, so it's best to store the acquired data in a database.
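As a rough sketch of that caching idea, the snippet below keeps the raw JSON response per image in SQLite through PDO. The choice of SQLite, the table name `emotion_results`, and the helpers `save_result` and `load_result` are all assumptions for illustration; the post does not say which database or schema was used. It requires the pdo_sqlite extension.

```php
<?php
// Assumption: SQLite via PDO. ':memory:' keeps the demo self-contained;
// point the DSN at a file to keep results between runs.
$pdo = new PDO('sqlite::memory:');
$pdo->setAttribute(PDO::ATTR_ERRMODE, PDO::ERRMODE_EXCEPTION);
$pdo->exec(
    'CREATE TABLE IF NOT EXISTS emotion_results (
        path TEXT PRIMARY KEY,
        response TEXT NOT NULL
    )'
);

// Store the raw JSON response for an image, replacing any earlier row.
function save_result(PDO $pdo, string $path, string $json): void
{
    $stmt = $pdo->prepare(
        'INSERT OR REPLACE INTO emotion_results (path, response) VALUES (?, ?)'
    );
    $stmt->execute([$path, $json]);
}

// Return the stored JSON for an image, or null if it has not been analyzed yet.
function load_result(PDO $pdo, string $path): ?string
{
    $stmt = $pdo->prepare('SELECT response FROM emotion_results WHERE path = ?');
    $stmt->execute([$path]);
    $row = $stmt->fetchColumn();
    return $row === false ? null : $row;
}

save_result($pdo, 'photo-001.jpg', '[{"scores":{"happiness":0.9999242}}]');
```

Checking `load_result` before calling the API means each image costs at most one request against the monthly quota.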

Now that we have all the data, it's time for the review!

I felt like I had already built plenty and was about to wrap up, but the all-important judging to decide number one is still to come!

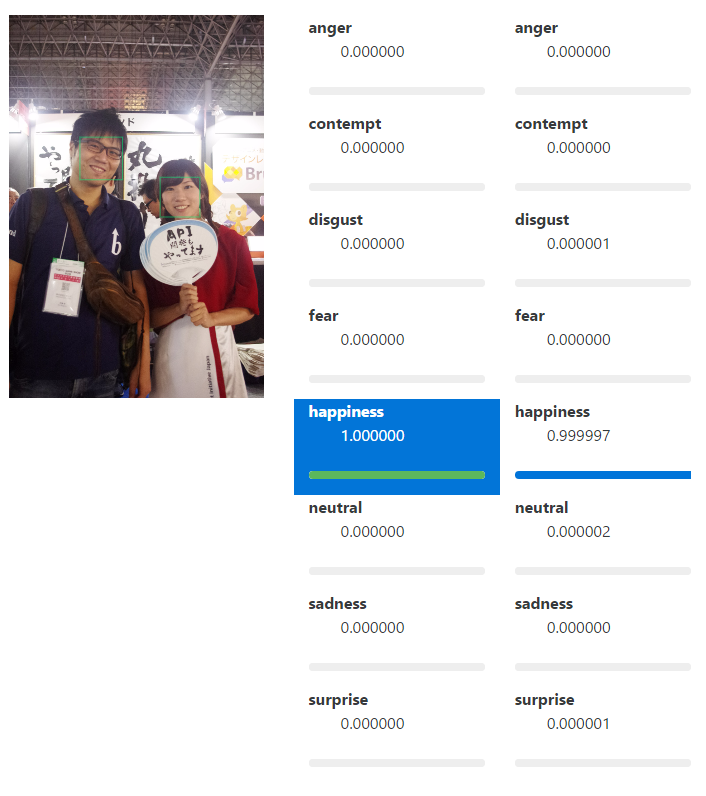

The analyzed data includes information on what emotions are present in each facial expression and to what extent.

The emotions classified are as follows:

- anger

- contempt

- disgust

- fear

- happiness

- neutral

- sadness

- surprise

This classification comes from the preview version as of December 27, 2016, so the set of emotions may grow or shrink in the future.

The data format may also change to something completely incompatible.

The scores are normalized so that they sum to 1.

In other words, you can multiply each score by 100 and read it as a percentage, with the total being 100%.
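To make the percentage reading concrete, here is a small sketch that takes the scores from the sample response shown earlier, checks that they add up to roughly 1, and converts each to a percentage. The variable names are illustrative only.

```php
<?php
// Scores copied from the sample response shown earlier in this post.
$scores = [
    'anger'     => 2.41070044E-08,
    'contempt'  => 4.531843E-06,
    'disgust'   => 7.3893716E-07,
    'fear'      => 1.44139625E-08,
    'happiness' => 0.9999242,
    'neutral'   => 6.80201556E-05,
    'sadness'   => 3.14932123E-07,
    'surprise'  => 2.19046137E-06,
];

$total = array_sum($scores); // approximately 1, up to rounding in the published values

// Convert each score to a percentage rounded to two decimal places.
$percent = [];
foreach ($scores as $emotion => $score) {
    $percent[$emotion] = round($score * 100, 2);
}

arsort($percent); // strongest emotion first
foreach ($percent as $emotion => $value) {
    printf("%-10s %6.2f%%\n", $emotion, $value);
}
```

For this sample, happiness dominates and every other emotion rounds to a fraction of a percent.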

Of the emotions listed above, "happiness" seems to be the only one that's relevant for this review, so we'll use this data as the basis for our evaluation.

(Although, since the analysis is already complete at this point, it's fair to say the evaluation is finished.)

The shocking result... And then..

Perhaps because smiling comes with the job, the happiness scores were high across the board, which made the judging difficult.

The reason: 21 of the results contained nothing but happiness!

In other words, "100% Happiness."
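Picking out such results from stored scores could look like the sketch below. The file names and values are made up for illustration and are not the data from this post, `perfect_scores` is a hypothetical helper, and treating "100% happiness" as a score of at least 1.0 is an assumption.

```php
<?php
// Made-up happiness scores keyed by file name (illustration only).
$happiness = [
    'booth-a.jpg' => 1.0,
    'booth-b.jpg' => 0.9999242,
    'booth-c.jpg' => 0.8731,
    'booth-d.jpg' => 1.0,
];

// Assumption: "100% happiness" means a score of at least $threshold.
function perfect_scores(array $happiness, float $threshold = 1.0): array
{
    $matches = array_filter($happiness, fn($score) => $score >= $threshold);
    return array_keys($matches); // file names, in their original order
}

$perfect = perfect_scores($happiness);
arsort($happiness); // rank all images, happiest first

printf("%d of %d images scored 100%% happiness\n", count($perfect), count($happiness));
```

Lowering the threshold slightly, for example to 0.9995, would also catch scores that only display as 100% after rounding.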

I feel like giving an award to every booth model who achieved that score.

However, one person stood out as exceptional: they appeared in six photos, and all six showed 100% happiness.

I'm honored

That's the image!!!

It's a group of five, and this is the one in the middle.

Like the Red Ranger in Gorenger,

Ikariya Chosuke in The Drifters,

or Ginyu in the Ginyu Force.

In other words, that's what this means.

You were born to stand in the center.

That was amazing.

I was deeply moved.

I hope to see you at next year's game show.

That's all

But who said that was the grand prize?

However,

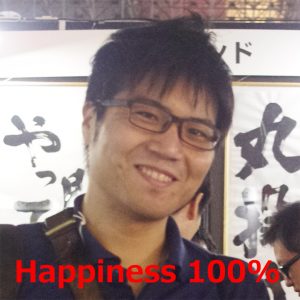

there was one image that, despite all those strong contenders, deeply resonated with me.

That's the image

The score is, of course, 100% Happiness.

Data doesn't lie.

Wait, isn't that someone from Beyond?

Even if people call it favoritism toward one of our own, I have no regrets about this decision.

The photo shows a colleague wearing a Little Red Riding Hood costume borrowed from IIJ, and it was taken before the start of the second day.

After standing all day the day before, they must have been tired, yet the smile is still wonderful.

Perhaps that smile even helped bring in some of our work.

As one of the people involved, I was deeply moved by this result, so I would like to award this image the grand prize

Well, I'm glad it all worked out!

Finally, I would like to close with a close-up of the grand prize winner

Is that you?

That's all
